Around the world, many people have lost their jobs during the pandemic. In parallel, automation, AI, and the use of robots are becoming more and more common. Indeed, the health crisis has accelerated firms’ digitalization and automation processes. In the past, when automation eliminated jobs, companies created new ones to meet their needs. This, however, is no longer the case: as automation lets companies do more with fewer people, successful companies do not need as many workers. As pointed out by Semuels (2020), in 1964 the most valuable company in the U.S. was AT&T, with 758,611 employees. The most valuable company today is Apple, which has around 137,000 employees.

The service sector has always been a laboratory for innovation, as it is an inflection point between productivity and personalization. In this respect, technologies such as AI, cloud computing and data banks have been implemented to revolutionize the future of the industry. Robotics is another newcomer to the service sector. Robots used in this field are called “service robots”.

Research into the ethical issues of service robots

Our research focused on the ethical issues linked to the interaction between humans and robots in a service delivery context. We wanted to see how ethical concerns influence users’ intention to use a robot in a frontline service context.

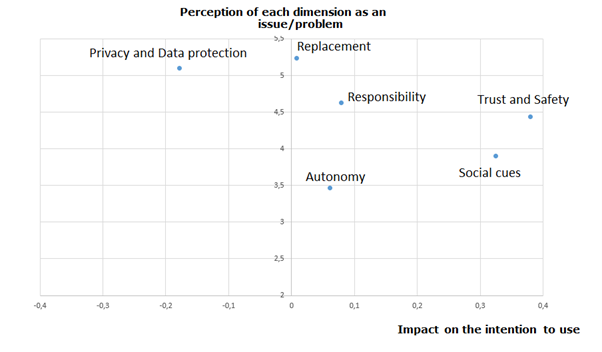

In Figure 1, the vertical axis represents the mean score for each ethical issue and the horizontal axis represents the impact of each ethical issue on the “intention to use”. Focusing first on the vertical axis, we observed that the most important ethical issue is “replacement and its implications for labor” (i.e., I think robots in a service delivery context will cut employment), with an average of 5.25 on a Likert scale from 1 to 7. The second is “privacy and data protection” (i.e., I mind giving personal information to a robot in a service delivery context), with an average of 5.09. The third most worrying dimension is “responsibility” (i.e., I think the law, and subsequent punishment, should apply to robots in a service delivery context), with an average of 4.62. The means for “trust and safety”, “social cues”, and “autonomy” are 4.43, 3.9, and 3.46, respectively.

When we measure the impact of each ethical issue on the intention to use the robot in the future, we observe that “trust and safety” (i.e., I perceive robots as safe in a service delivery context) has the strongest impact on the decision to use the robot. The second variable affecting the intention to use a service delivery robot is “social cues” (i.e., I perceive robots as social actors in a service delivery context). The third variable is “privacy and data protection”, which has a negative impact on the intention to use the robot.

6 main ethical concerns about robots in the service industry

To optimize the use of robots, we advise companies to heed the following ethical concerns:

- Social cues: According to our findings, the more a robot displays social cues, the higher the user’s intention to use it will be. Therefore, robots should deliver a service that is as human-like as possible and, thus, include social features. However, a customer service robot should not hinder or replace human-to-human interactions. It is important to guarantee this aspect when a company wants to use robots in a service delivery context.

- Trust and safety: The extent to which a robot is deemed safe and trustworthy is important to the user’s intention to use the technology. Although it can be argued that designers and producers are responsible for creating robots that are safe for users, companies using a service robot must always guarantee this major dimension.

- Autonomy: Even though, in our case, this variable did not have an influence on the user’s intention to use a robot, the idea of being able to restrict a robot’s autonomy can be found in ethical charters. Therefore, we argue that a company using a service robot should always be able to regulate a robot’s autonomy, especially in cases when the consequences of the robot’s actions cannot be totally controlled.

- Responsibility: A robot’s responsibility for its actions is important for the user’s intention to use the technology. Therefore, companies using a service robot should pay attention to this point and clearly define, before the deployment of their robot, who is responsible for the robot’s actions. Moreover, due to liability concerns, a robot’s actions and decisions must always be traceable.

- Privacy and data protection: Privacy and data protection play a big role in the intention to use a robot. First, a company using a service robot should always respect its customers’ right to privacy. As transparency (i.e., disclosure about what data is collected, how, and why) leads to a better user experience, we advise companies (and their robots) to be transparent about the collection and use of their customers’ data. Second, companies using customer service robots should ensure that they protect their customers’ data by encrypting and safeguarding it. Third, companies should always make sure that the robot’s data collection complies with official guidelines and local laws. Finally, several legal and regulatory questions have to be considered when physical robotic systems are integrated into cloud-based services.

- Human worker replacement: Although this variable was not found to be important in our model, best practices in relation to the subject can be established. A company should involve its employees in the choices and decisions related to the service robot, such as the choice of the robot or the definition of its tasks. If a robot takes a worker’s job, the firm should retrain that employee for a new occupation.

Many companies are in the midst of a digital transformation process aimed at reducing their costs, creating an original customer experience or increasing productivity. The challenge is to find a win-win balance where the company reduces its costs and adapts the activities of its employees while the customer feels the added value of using this kind of technology. The societal impact will be significant over the next few years, and these ethical questions will be at the heart of thinking in this area.

About the author

Dr Reza Etemad-Sajadi is Associate Dean for Faculty Affairs and Associate Professor at EHL.